Bazaarly - A Thought Exercise - Universe B

AI can speed up coding, but architecture and organisational design determine whether that speed translates into actual delivery throughput.

This is a continuation of a thought exercise I started in my previous post. Before proceeding with this part, please read the first part, which you can find here.

Standard disclaimer: the views and opinions expressed here are my own only and in no way represent the views, positions or opinions – expressed or implied – of my employer (present and past). Bazaarly, Best Bazaar, and all companies, teams, people, and scenarios described in this post are entirely fictional. Any resemblance to real companies, organisations, products, or events is purely coincidental.

Bazaarly - Universe B

Now, suppose there is a different universe in our “Architecture Multiverse” (like in those Marvel movies), one that came into being because Bazaarly made different choices when designing its architecture.

Virtual Tours Stored with RE Team

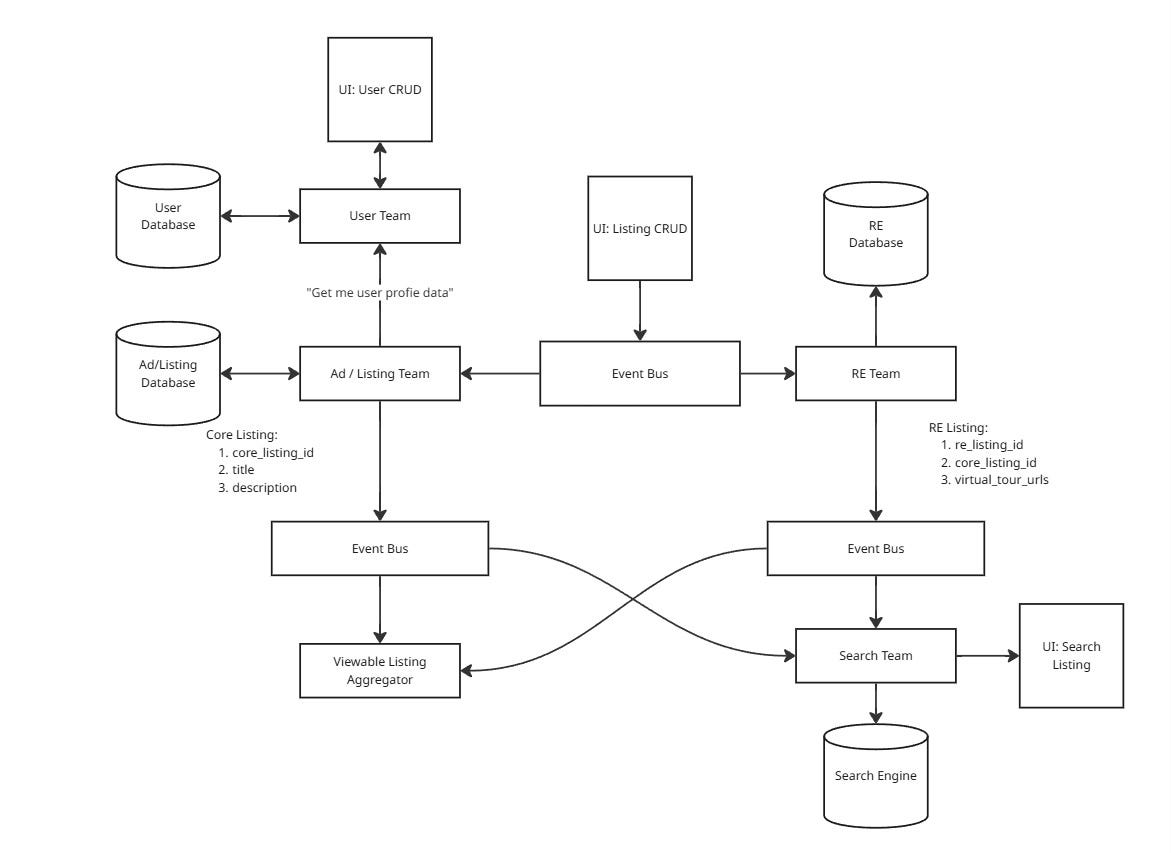

Instead of storing the virtual tour attributes in the Listing database, both teams agreed to store them in a separate database owned by the RE Team. The idea underpinning this approach was that a Listing could have multiple views, or projections, depending on who was using or owning it. A Listing could be viewed as an object with some core attributes (the “Core Listing”) plus “something else on top”. The core listing contained the attributes common to all listings: id, title, description, etc. For the RE Team, the “something else” was the set of virtual tour attributes, which augmented the core listing to create the “Real Estate Listings” projection.
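A minimal sketch of the “core listing plus something on top” idea, assuming simple in-memory records. The class names, the `tour_url` attribute and `project_real_estate_listing` are hypothetical and only illustrate how two independently owned records can be joined on a shared listing id to form a projection.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class CoreListing:
    """Attributes common to every listing (owned by the Listing Team)."""
    listing_id: str
    title: str
    description: str


@dataclass(frozen=True)
class VirtualTour:
    """RE-specific 'something else on top' (stored in the RE Team's database)."""
    listing_id: str
    tour_url: str  # hypothetical attribute name


@dataclass(frozen=True)
class RealEstateListing:
    """Projection: the core listing augmented with RE-owned attributes."""
    core: CoreListing
    virtual_tour: Optional[VirtualTour]


def project_real_estate_listing(
    core: CoreListing, tours_by_listing: Dict[str, VirtualTour]
) -> RealEstateListing:
    # Join the two independently owned records on the shared listing id.
    return RealEstateListing(core=core, virtual_tour=tours_by_listing.get(core.listing_id))
```

Neither record knows about the other's storage; only the listing id is shared, which is what lets each team evolve its part independently.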

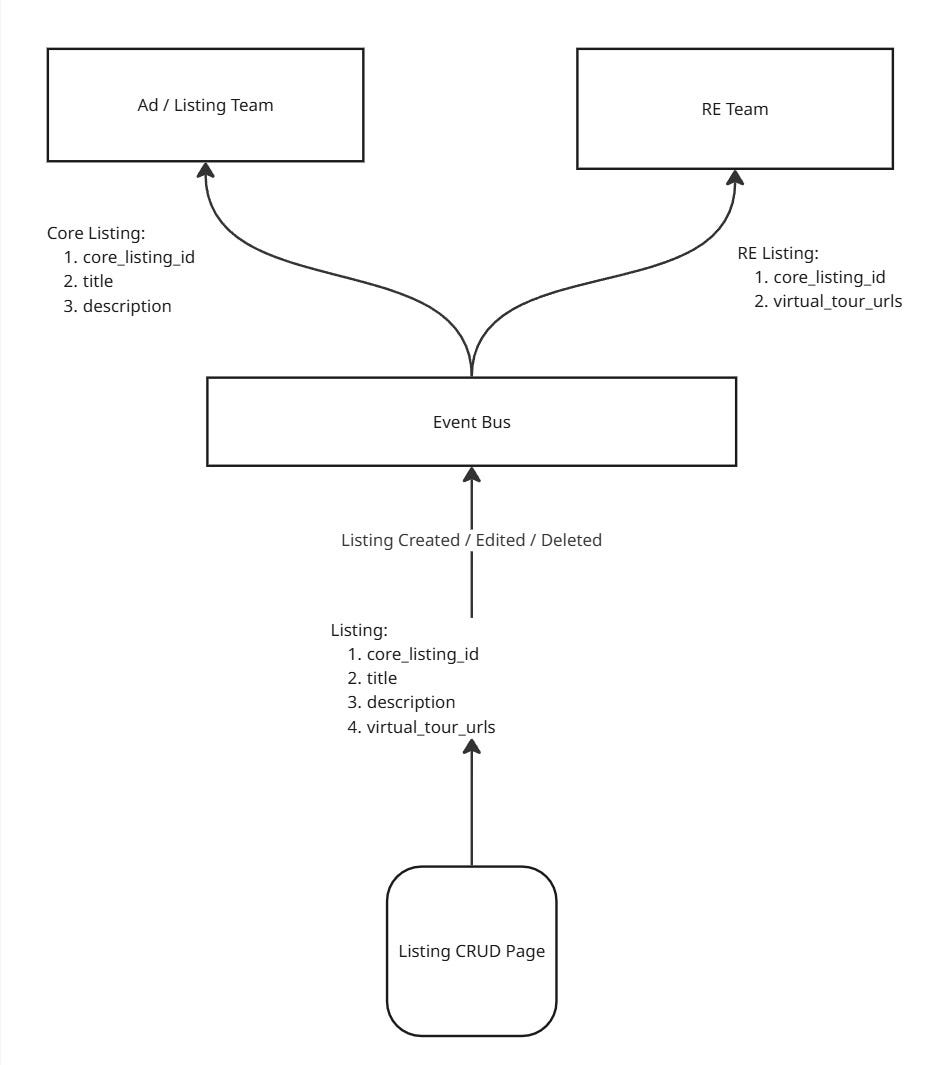

The teams decided that the Create/Update Listing Page would aggregate both core listing and real estate listing attributes in one place, so that sellers got a consistent CRUD experience, while in the backend the attributes would be distributed to teams based on domain ownership. When a seller created or edited a listing, a “listing published/edited” event containing all the submitted attributes would be published to an event bus (e.g. a queue or a Kafka topic), and each interested team would consume only the attributes it owned.
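The fan-out described above can be sketched as follows, assuming the event is a flat dictionary and each team keeps its own store. The `OWNERSHIP` map, attribute names and `InMemoryStore` are hypothetical stand-ins for real topics and databases; the point is that every team reads the same event but persists only its own slice.

```python
from typing import Any, Dict, Set

# Hypothetical ownership map: which attributes of the event each team owns.
# Both teams keep listing_id so they can correlate their records.
OWNERSHIP: Dict[str, Set[str]] = {
    "listing": {"listing_id", "title", "description"},
    "real_estate": {"listing_id", "virtual_tour_url"},
}


def owned_attributes(event: Dict[str, Any], team: str) -> Dict[str, Any]:
    """Keep only the attributes of a 'listing published/edited' event that `team` owns."""
    return {key: value for key, value in event.items() if key in OWNERSHIP[team]}


class InMemoryStore:
    """Stand-in for a team's own database, keyed by listing id."""

    def __init__(self) -> None:
        self.rows: Dict[str, Dict[str, Any]] = {}

    def upsert(self, row: Dict[str, Any]) -> None:
        self.rows[row["listing_id"]] = row


def consume(event: Dict[str, Any], team: str, store: InMemoryStore) -> None:
    # Each team subscribes to the same event but persists only its own slice.
    store.upsert(owned_attributes(event, team))
```

Attributes that no team claims (such as an event `type` field) are simply dropped by every consumer.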

To render the page, the teams decided to leverage micro-frontends: the Listing Team still owned the frontend, but it composed the final page with the RE micro-frontend, which was developed by the RE Team.

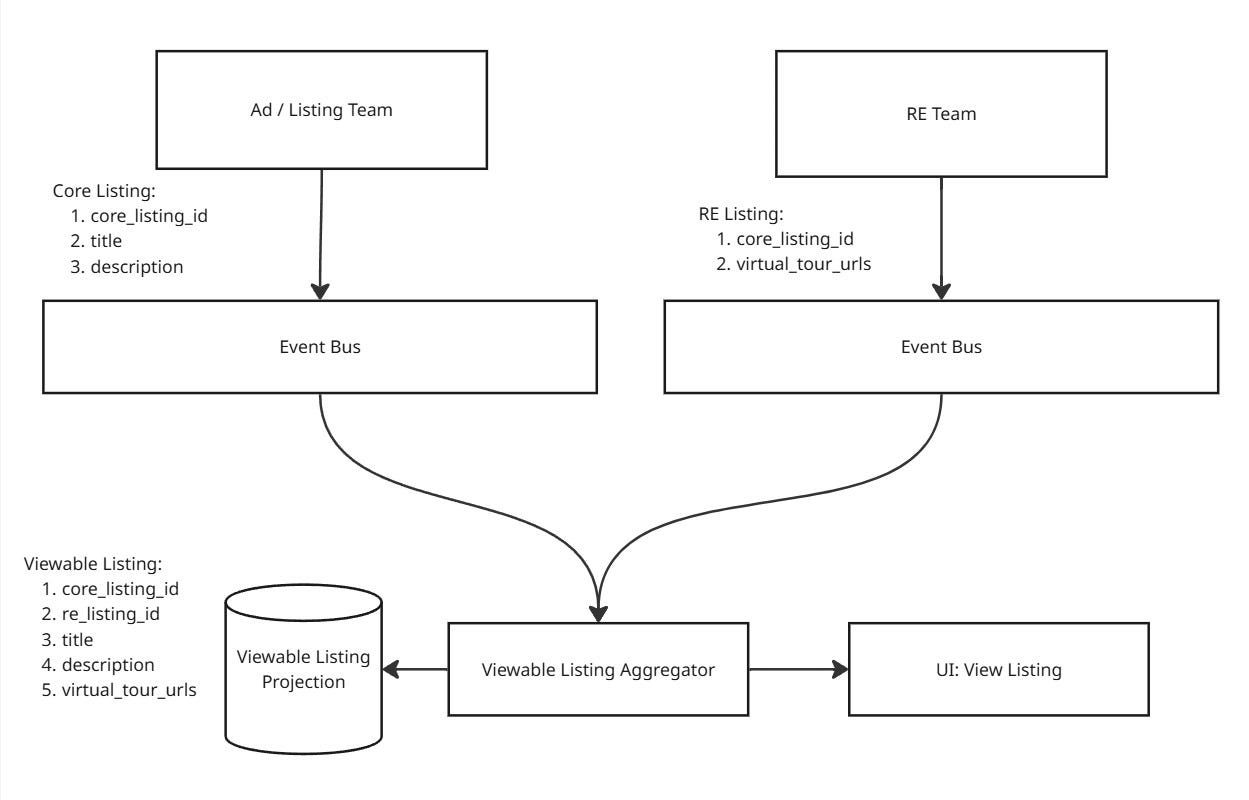

To render the View Listing page, the teams decided to create a projection, Viewable Listing, that aggregated listing data from different teams. The projection was pre-computed: every time any of the source listings or projections was updated, an event was emitted and the computed projection was updated accordingly.
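A minimal sketch of such a pre-computed projection, assuming each team publishes the full set of attributes it owns for a listing and the projection keeps only the latest contribution per team. The class and method names are hypothetical; a real system would persist this state rather than hold it in memory.

```python
from typing import Dict, Optional


class ViewableListingProjection:
    """Pre-computed read model combining listing data owned by different teams."""

    def __init__(self) -> None:
        # listing_id -> source team -> latest attributes published by that team
        self._parts: Dict[str, Dict[str, dict]] = {}
        # listing_id -> fully merged document served to the View Listing page
        self._views: Dict[str, dict] = {}

    def on_event(self, source: str, listing_id: str, attributes: dict) -> None:
        """Store a source's latest contribution and recompute the merged view."""
        parts = self._parts.setdefault(listing_id, {})
        parts[source] = dict(attributes)  # replace this source's slice wholesale
        view: dict = {"listing_id": listing_id}
        # Teams own disjoint attributes, so sorting only makes the merge deterministic.
        for name in sorted(parts):
            view.update(parts[name])
        self._views[listing_id] = view

    def get(self, listing_id: str) -> Optional[dict]:
        """Read path: a single lookup, no synchronous calls to other teams."""
        return self._views.get(listing_id)
```

All the merging work happens on the write path, when events arrive, so the latency-critical read path stays a simple lookup.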

The teams felt this also reduced the likelihood of tail latency amplification, as they would not have to make synchronous calls to other services when rendering a latency-critical page. Even though the resulting architecture was more complex, they felt it was worth it, because every 100 milliseconds of added latency would directly impact Bazaarly’s revenue.
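A small worked example of why synchronous fan-out amplifies tail latency, assuming the calls are independent and each one is slower than its own 99th percentile 1% of the time. The numbers are illustrative, not Bazaarly measurements.

```python
def probability_page_hits_tail(n_calls: int, per_call_tail: float = 0.01) -> float:
    """Chance that at least one of n independent synchronous calls is slower than its own p99.

    Assumes calls are independent and each exceeds its p99 with probability per_call_tail.
    """
    if n_calls < 0:
        raise ValueError("n_calls must be non-negative")
    return 1.0 - (1.0 - per_call_tail) ** n_calls


for n in (1, 10, 100):
    print(n, round(probability_page_hits_tail(n), 3))
```

Under these assumptions, a page that fans out to 10 calls hits at least one tail-latency call roughly 9.6% of the time, and one that fans out to 100 calls roughly 63% of the time. A pre-computed projection avoids this by turning the read into a single lookup.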

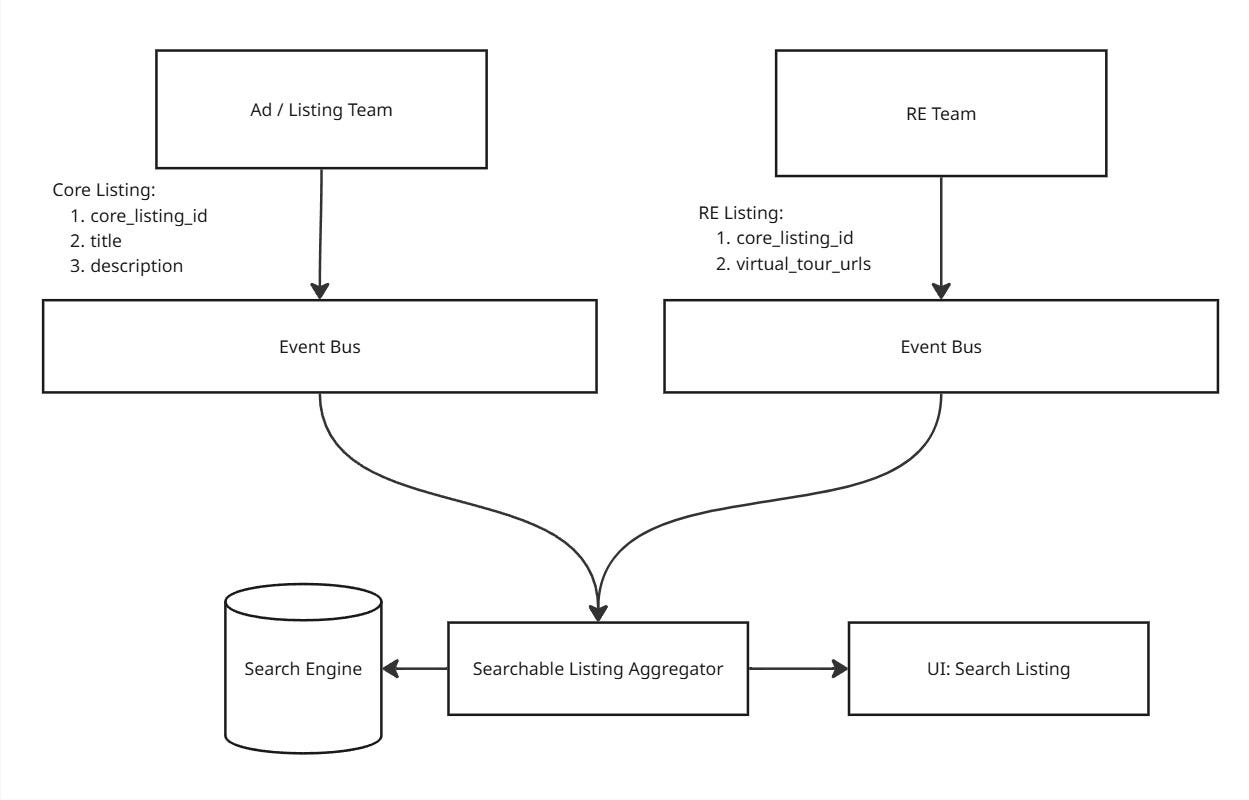

Similarly, the Search Team was now also aggregating listing data from different sources and creating a different projection - Searchable Listing - with the necessary data.
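The Searchable Listing projection can take a different shape from the Viewable Listing, because a search engine needs indexable and filterable fields rather than a page-ready document. The sketch below is hypothetical: the field names (`text`, `has_virtual_tour`) and the input dictionaries are assumptions, chosen only to show one projection flattening data from two owners.

```python
from typing import Any, Dict, Optional


def to_search_document(core: Dict[str, Any], real_estate: Optional[Dict[str, Any]]) -> Dict[str, Any]:
    """Build a hypothetical Searchable Listing document from two teams' data."""
    re_attrs = real_estate or {}
    return {
        "id": core["listing_id"],
        # Free-text field a search engine would tokenise.
        "text": f"{core['title']} {core['description']}",
        # Derived boolean facet, e.g. for a "has virtual tour" filter.
        "has_virtual_tour": "virtual_tour_url" in re_attrs,
    }
```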

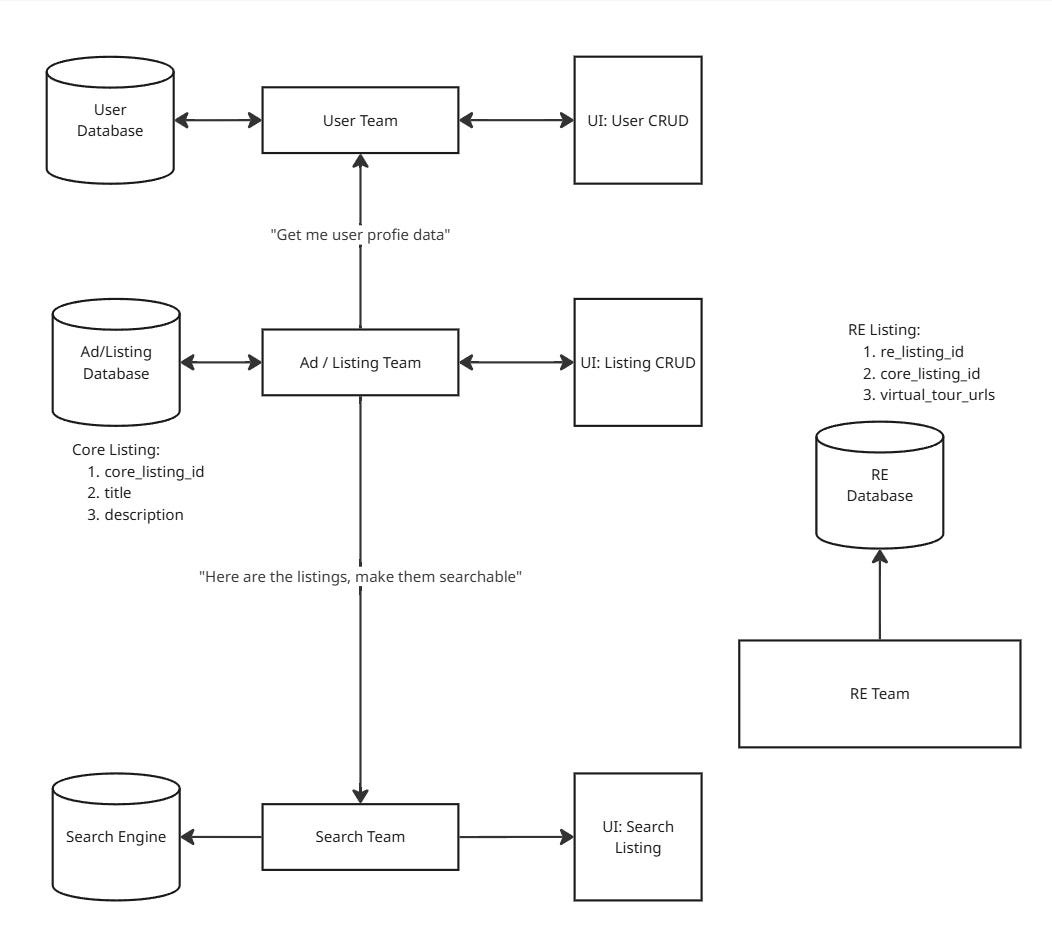

After all things had been put in their place, this is what the final architecture looked like:

Putting the infrastructure in place and implementing the feature took around one and a half months, because the teams now had to provision more infrastructure before they could implement the feature.

This architecture differed from Universe A in many ways. One of the primary differences was that Universe A had a single-writer listing model, which enforced strong consistency across all listing views at the same time. This universe, by contrast, relied more heavily on asynchronously updated projections for reads, so different consumers had to tolerate stale data and other anomalies. However, the Bazaarly folks also knew that Starbucks Does Not Use Two-Phase Commit and yet was able to sell a lot of coffee, so they considered this a reasonable model of how the real world works.
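One common way to contain the “other anomalies” of asynchronous projections, such as duplicate or out-of-order events, is a per-entity version check. This is a minimal sketch, assuming producers attach a version number that increases with every change to a listing; `VersionedProjection` is a hypothetical name. It prevents the view from rolling back to older data, but it does not remove staleness: readers can still see a version that lags behind the source.

```python
from typing import Dict, Optional, Tuple


class VersionedProjection:
    """Projection that ignores stale or duplicate events using per-listing versions.

    Assumes each producer attaches a version number that increases with every
    change to a listing.
    """

    def __init__(self) -> None:
        self._state: Dict[str, Tuple[int, dict]] = {}

    def apply(self, listing_id: str, version: int, attributes: dict) -> bool:
        current = self._state.get(listing_id)
        if current is not None and version <= current[0]:
            return False  # older or already-applied event: ignore it
        self._state[listing_id] = (version, dict(attributes))
        return True

    def read(self, listing_id: str) -> Optional[dict]:
        entry = self._state.get(listing_id)
        return None if entry is None else entry[1]
```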

Monetising Virtual Tours

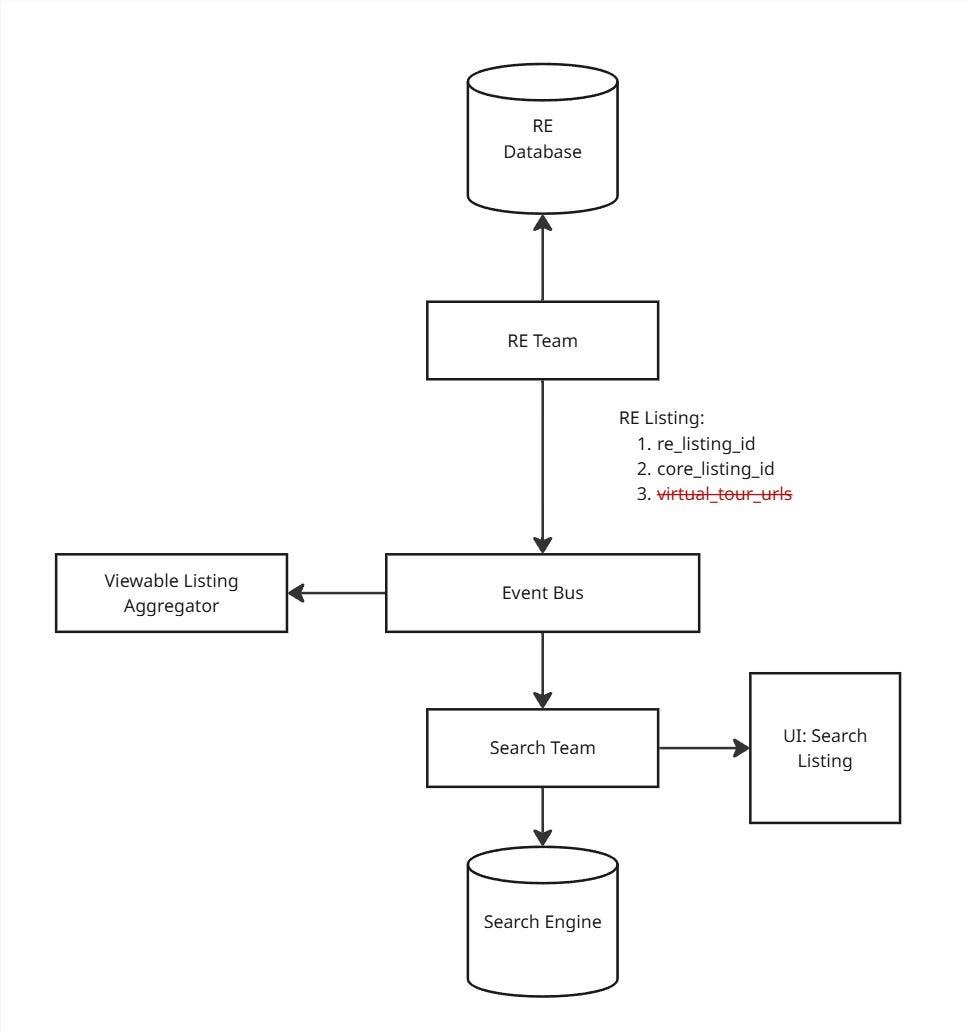

Now, when it came to monetising the virtual tours, the RE Team implemented the entire subscription feature on their side. They then decided that when a subscription ran out, they would publish an update via the event bus that simply omitted all virtual-tour-related attributes. When those attributes were missing, clients would not display them in the frontends, effectively disabling the feature.
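A sketch of the “omit to disable” approach, assuming hypothetical function names and a `virtual_tour_url` attribute. It also highlights a design detail the approach depends on: omission only removes the tour if consumers replace the RE Team’s slice of a listing wholesale. A consumer that patches field by field would keep the stale value.

```python
from datetime import date
from typing import Any, Dict, Optional


def real_estate_update(listing_id: str, tour_url: str, subscription_end: date, today: date) -> Dict[str, Any]:
    """Hypothetical RE Team publisher: omit virtual-tour attributes once the subscription has run out."""
    event: Dict[str, Any] = {"listing_id": listing_id}
    if today <= subscription_end:
        event["virtual_tour_url"] = tour_url
    return event


def apply_by_replacing(current: Dict[str, Any], event: Dict[str, Any]) -> Dict[str, Any]:
    # The RE Team's slice is replaced wholesale, so omitted attributes disappear.
    return dict(event)


def apply_by_merging(current: Dict[str, Any], event: Dict[str, Any]) -> Dict[str, Any]:
    # Field-by-field patching keeps old values for omitted attributes.
    return {**current, **event}


def render_virtual_tour(re_slice: Dict[str, Any]) -> Optional[str]:
    """Client side: a missing attribute simply means 'do not show the tour'."""
    url = re_slice.get("virtual_tour_url")
    return None if url is None else f'<iframe src="{url}"></iframe>'
```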

Since the changes were entirely on their side, no coordination between teams was needed at all. They were done with this change in a couple of days and celebrated the release with a Cookie and Cake Party!

Extending Listing Expiry Date for Real Estate Listings

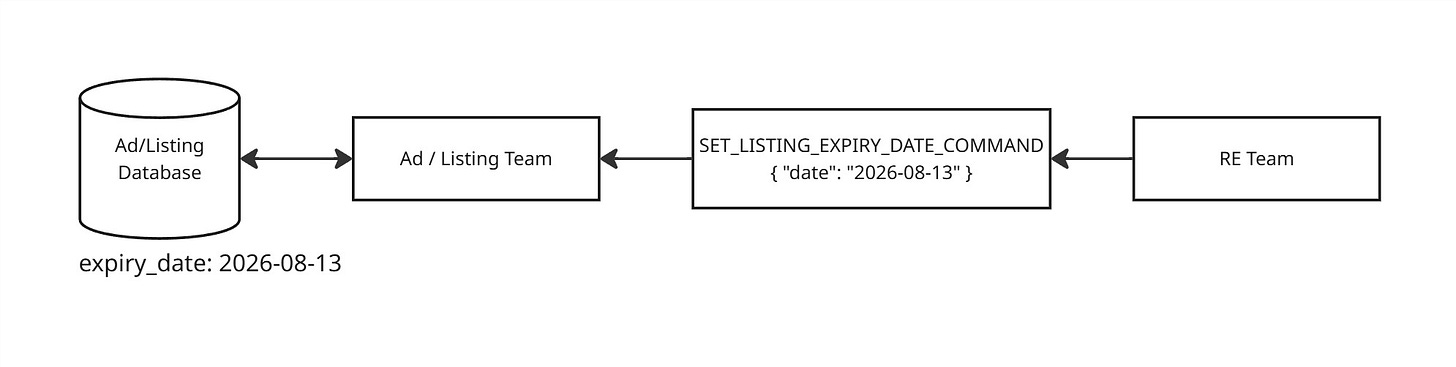

The teams followed a similar asynchronous approach when implementing this change: instead of the Listing Team making an API call to check whether a seller was entitled to a two-year lifecycle for their RE listings, it would listen for a command from the RE Team containing an expiry date for the listing. The Listing Team would trust the date set in the command and would not add any additional checks or logic in this area.
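A minimal sketch of the Listing Team’s side, assuming a hypothetical `SetListingExpiry` command and an in-memory store. The key property is what the handler does not contain: no subscription or entitlement check, because the RE Team’s date is treated as authoritative.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict


@dataclass(frozen=True)
class SetListingExpiry:
    """Hypothetical command the RE Team sends to the Listing Team."""
    listing_id: str
    expires_on: date


class ListingExpiryService:
    """Listing Team side: stores whatever expiry date the RE Team decides."""

    def __init__(self) -> None:
        self.expiry: Dict[str, date] = {}

    def handle(self, command: SetListingExpiry) -> None:
        # Deliberately no entitlement or subscription check here: the RE Team
        # is the authority on RE listing lifecycles, so its date is trusted.
        self.expiry[command.listing_id] = command.expires_on
```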

Monetising Listing Expiry Via Better Subscription Differentiation

Because of the architectural decision the Listing Team and the RE Team took when extending the RE listing lifecycle, the dependency between the two teams was now inverted: the Listing Team became data-dependent on the RE Team, because the RE Team was now the authoritative source of the RE listing expiry date. As a result, the RE Team was able to offer multiple subscription products with different expiry dates entirely on their own, catering to different groups of sellers.
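A sketch of the RE Team’s side after the dependency inversion. The tier names, their lifetimes, and the dictionary-shaped command are all hypothetical; two years is approximated as 730 days. Adding or changing a tier is a local change to this mapping, and the Listing Team’s handler does not change at all.

```python
from datetime import date, timedelta

# Hypothetical subscription tiers owned by the RE Team; names and lifetimes are illustrative only.
TIER_LIFETIME_DAYS = {
    "re_standard": 365,
    "re_plus": 540,
    "re_premium": 730,  # roughly the two-year lifecycle
}


def expiry_command(listing_id: str, tier: str, published_on: date) -> dict:
    """Build the hypothetical command the RE Team sends to the Listing Team."""
    if tier not in TIER_LIFETIME_DAYS:
        raise ValueError(f"unknown subscription tier: {tier}")
    return {
        "type": "SetListingExpiry",
        "listing_id": listing_id,
        "expires_on": published_on + timedelta(days=TIER_LIFETIME_DAYS[tier]),
    }
```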

Challenging Time Ahead: A New Competitor Comes to Town!

To counter the Best Bazaar threat, the RE Team quickly introduced further differentiation in their subscription tiers, added new attributes, and so on. They could respond to business requests fast because almost all of them could be implemented solely by working on the code and the parts of the architecture under their full ownership.

Questions to Readers

Here are some questions for patient readers about this universe, which are exactly the same as those in the previous post:

1. What were the major steps needed in this universe to get a feature from ideation to production?
2. Which teams were needed to do work that the RE Team could not complete themselves to ship features (i.e., virtual tours, extended lifecycle)?
3. What was the implicit optimisation goal the Listing Team and the RE Team were thinking of when they decided to introduce virtual tour attributes in the Listing domain?
4. What was the primary cost introduced by the optimisation goal mentioned in question 3, and where did it show up in the feature lead time?
5. What would happen if Bazaarly started spinning up more specialised category teams? What if they decided to go the other way round - merge verticalized teams back into one? How would these reorganisations affect the feature lead time?
6. Suppose Bazaarly started using AI tools and, as a result, cut implementation and debugging time by 50%. In addition, they had access to AI-powered monitoring and observability tools, which made understanding complex production systems 50% easier. What would happen to the end-to-end feature lead time in this case?
7. What if the AI tools increased the speed of writing code by 3x? Would Bazaarly produce 3x more features in the same amount of time?

Epilogue: Match the Pipes

I am a big fan of Kent Beck’s Tidy First blog. A few days ago, he wrote a post that explained the Theory of Constraints beautifully. Here is the post.

Once you have read it, here are two bonus questions for you:

If we apply the Theory of Constraints to our thought exercise, then what was the most restrictive part of the system in Universe A and Universe B that was hampering output?

In which universe would Bazaarly be able to truly leverage the power of AI to boost productivity? Why? What would the other universe need to do to gain the same efficiency boost?